The emergence of Artificial Intelligence (AI), particularly generative AI, has sent shockwaves through various sectors, including academia. While some have embraced its potential with open arms, others remain hesitant to integrate it into their workplaces. It’s widely acknowledged that the efficacy of generative AI depends significantly on the quality of its training data. However, a notable concern persists: there is little transparency about the origin of the data used to train large language models (LLMs). Developers frequently work with a considerable degree of secrecy, leaving stakeholders in academia unsure about the sources and methodologies used during the training process. This opacity raises valid concerns about data security and ethics, not only in academia but also in corporate medical and scientific communication agencies.

In the absence of robust policies governing the training of large language models, it’s evident that the publication industry is polarized into two factions.

On one side is a group of individuals and organizations who are willing to collaborate with generative AI model developers and believe in the power of working together to design an efficient system for processing and generating content. On the other side is a group that opposes the use of its data for training AI models, and rightly so. Its members are concerned about the potential misuse of their clients’ data (in this case, the data of authors, researchers, and scientists) and are adamant about safeguarding that data from being exploited without proper consent. Each faction has its own set of reasons for its stance on this issue.


Individuals within the group advocating collaboration with generative AI see a broader potential in it. They believe it could simplify researchers’ lives by providing access to a wealth of high-quality open, licensed, and proprietary content and data. They believe that well-trained LLMs could speed up access to vast scholarly content, ultimately helping the academic, research, and scientific community achieve its objectives efficiently. Moreover, they are of the opinion that embracing generative AI in alignment with academic standards not only fosters efficiency but also maintains integrity within the research process. In their view, a collaborative approach that adheres to ethical guidelines and respects intellectual property rights ensures that advancements in AI technology enrich scholarly endeavors rather than compromise them.

Conversely, the group advocating for data protection raises a plethora of concerns regarding data utilization in training LLMs. They perceive generative AI models as engaging in what they term “AI mining” of important research data. Their apprehension revolves around the potential for copyright infringement and the use of their data without consent or compensation. These individuals are concerned about their lack of control over how their data is used for training LLMs, and they emphasize the need for stringent laws to protect intellectual property rights. Moreover, there is ongoing discourse regarding the accessibility of the data used for LLM training, with some asserting that while certain datasets may be openly available online, access might be restricted to abstracts rather than full-text articles.

The crux of their argument lies in the assertion that the current practices surrounding AI data usage in publishing lack transparency and fail to adequately address the ethical implications of utilizing proprietary data for training purposes.

Another significant concern revolves around the absence of standardized guidelines and global laws pertaining to AI model training. As a result, publishers are advocating for comprehensive regulations to ensure transparency about data usage in training AI models and to uphold ethical data practices. This demand underscores the urgent need for clear and robust guidelines to govern the ethical use of data in AI development, addressing concerns including transparency and accountability.

While publishers express concern regarding data mining, AI model developers offer a contrasting perspective. They acknowledge using openly available online data to train AI models but emphasize that the outputs generated on AI platforms are not direct reproductions of the original data. Instead, these results undergo paraphrasing, wherein AI models process the data based on learned patterns and algorithms. This point is crucial: according to the developers, AI models do not simply replicate the data but generate novel outputs from it. Hence, it is essential to understand where the data comes from and how it is processed in the AI models.

As we navigate this complex terrain, it is imperative for all stakeholders to engage in meaningful dialogue, to confront these challenges with courage and integrity, and to work toward a future where AI serves as a force for good, enriching research and development. Using AI to expand the boundaries of human knowledge and imagination is one of the vital aspects of training AI for a better world.

Looking at the current scenario around the usage of generative AI, what are your thoughts on the ethical dilemmas surrounding AI data usage in the publishing sector? Do you believe that collaborative efforts between publishers and AI developers can strike a balance between innovation and ethical responsibility? Or do you envision a compromised space where research integrity is a lost cause? Let’s engage in a dialogue where each one of us plays an important role in creating a responsible AI-integrated space for medical and scientific communications and in upholding the soul of authentic research and transparent processes.

Introduction The landscape of medical writing is undergoing a significant transformation with the advent of artificial intelligence (AI). This pivotal shift is not just changing the way medical documents are created but is also redefining the roles and skills required of medical writers.

The landscape of medical writing is on the brink of a significant transformation, thanks to the advent of generative AI. This technology is not just a tool; it’s set to redefine what it means to be a medical writer.

Breast cancer remains one of the most significant health challenges for women worldwide. In 2022, it caused around 670,000 deaths

9 / 100 Powered by Rank Math SEO SEO Score “Write the paper as though no editor will ever see

9 / 100 Powered by Rank Math SEO SEO Score World Thyroid Day invites us to appreciate the tiny, butterfly-shaped

8 / 100 Powered by Rank Math SEO SEO Score In an era where speed, efficiency, and personalization have become

8 / 100 Powered by Rank Math SEO SEO Score Every 8 May, World Thalassemia Day shines an international spotlight on

Ovarian cancer remains one of the most challenging gynaecologic malignancies, often referred to as a “silent killer” due to its subtle onset and late-stage diagnosis.1 In 2022, 324,603 women worldwide were diagnosed with ovarian cancer.

12 / 100 Powered by Rank Math SEO SEO Score The healthcare landscape is being reshaped at an unprecedented pace,

7 / 100 Powered by Rank Math SEO SEO Score Biosimilars—biologic medicines that are highly similar to FDA-approved originator biologics—offer

12 / 100 Powered by Rank Math SEO SEO Score Communications In a rapidly evolving digital ecosystem, the pharmaceutical industry’s

6 / 100 Powered by Rank Math SEO SEO Score By Turacoz Healthcare Solutions | World Liver Day 2025 In

6 / 100 Powered by Rank Math SEO SEO Score The healthcare industry is experiencing a paradigm shift as patient

8 / 100 Powered by Rank Math SEO SEO Score In the evolving era of healthcare, data is the foundation

18 / 100 Powered by Rank Math SEO SEO Score In an era where healthcare decisions are increasingly driven by

7 / 100 Powered by Rank Math SEO SEO Score Colorectal cancer (CRC) is the third most commonly diagnosed form

11 / 100 Powered by Rank Math SEO SEO Score Real-world evidence (RWE) has emerged as a critical complement to

14 / 100 Powered by Rank Math SEO SEO Score Healthcare is a constantly evolving field, where innovation, research, and

13 / 100 Powered by Rank Math SEO SEO Score Chronic kidney disease (CKD) is often called ‘silent killer’ as

12 / 100 Powered by Rank Math SEO SEO Score Imagine a world where every woman has the power to

6 / 100 Powered by Rank Math SEO SEO Score Recently, while working on a project about preeclampsia during pregnancy,

79 / 100 Powered by Rank Math SEO SEO Score The pharmaceutical industry is undergoing a significant transformation. In the

75 / 100 Powered by Rank Math SEO SEO Score Introduction to Genitourinary Cancer Treatment Genitourinary (GU) cancers—including prostate, bladder,

79 / 100 Powered by Rank Math SEO SEO Score Medical writing demands utmost precision, as even a small error,

75 / 100 Powered by Rank Math SEO SEO Score In an extremely competitive market, it is a fact that

12 / 100 Powered by Rank Math SEO SEO Score Regulatory Changes in Medical Communications: Preparing for 2025 and Beyond

7 / 100 Powered by Rank Math SEO SEO Score Medical writing involves the expertise to craft the healthcare content

9 / 100 Powered by Rank Math SEO SEO Score Our recent poll revealed that Artificial intelligence (AI) integration is

16 / 100 Powered by Rank Math SEO SEO Score In the fast-evolving landscape of academic and medical publishing, journals

64 / 100 Powered by Rank Math SEO SEO Score Scientific advancement in academia depends greatly on research, but its

67 / 100 Powered by Rank Math SEO SEO Score In scientific research, precision and clarity are essential for ensuring

69 / 100 Powered by Rank Math SEO SEO Score Academic societies have long been integral to the advancement of

59 / 100 Powered by Rank Math SEO SEO Score In medical and scientific writing, effectively communicating complex information is

66 / 100 Powered by Rank Math SEO SEO Score The landscape of scientific publishing is transforming, driven by advances

58 / 100 Powered by Rank Math SEO SEO Score In the evolving medical research field, identifying unexplored areas and

69 / 100 Powered by Rank Math SEO SEO Score Are you finding it challenging to make your medical communications

64 / 100 Powered by Rank Math SEO SEO Score In the rapidly evolving academic publishing world, journal indexing plays

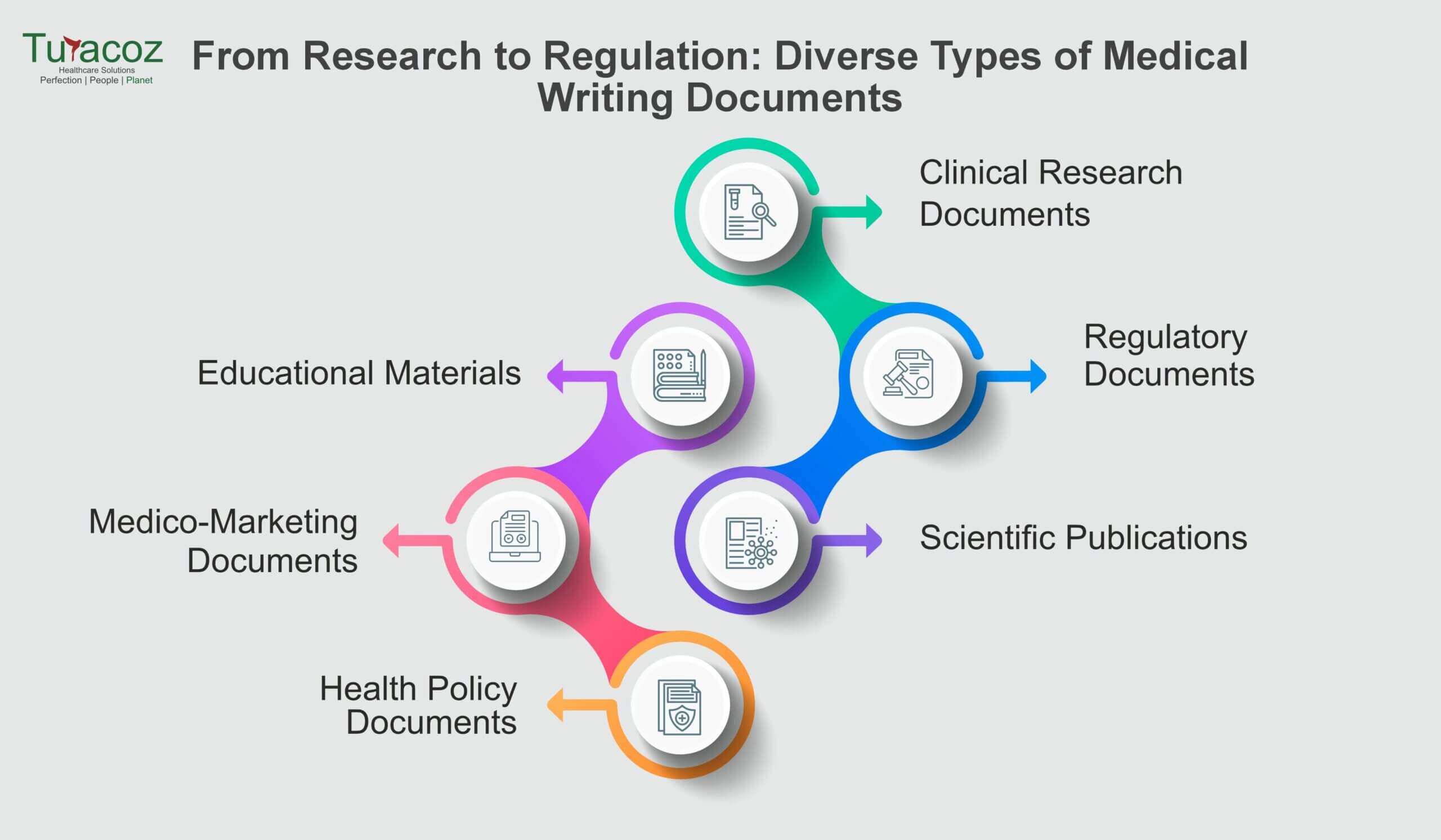

63 / 100 Powered by Rank Math SEO SEO Score Medical writing is an exciting and rapidly growing field that

54 / 100 Powered by Rank Math SEO SEO Score In the field of medical publishing, accuracy and precision are

63 / 100 Powered by Rank Math SEO SEO Score The journey from completing a research project to seeing your

63 / 100 Powered by Rank Math SEO SEO Score Academic publishing is undergoing a significant transformation, driven by technological

67 / 100 Powered by Rank Math SEO SEO Score In the rapidly evolving field of medical research, the application

63 / 100 Powered by Rank Math SEO SEO Score Scientific research dissemination has undergone a significant transformation in recent

65 / 100 Powered by Rank Math SEO SEO Score Journal metrics play a crucial role in evaluating the significance

59 / 100 Powered by Rank Math SEO SEO Score Artificial Intelligence (AI) and Machine Learning (ML) are revolutionizing various

68 / 100 Powered by Rank Math SEO SEO Score Scientific publishing is a cornerstone of academic and research progress

76 / 100 Powered by Rank Math SEO SEO Score The peer review process is a cornerstone of academic and

72 / 100 Powered by Rank Math SEO SEO Score Artificial Intelligence (AI) is rapidly transforming the healthcare industry, and

53 / 100 Powered by Rank Math SEO SEO Score In the rapidly changing domain of academic publishing, two distinct

60 / 100 Powered by Rank Math SEO SEO Score In the dynamic world of academia and medical research, effectively

71 / 100 Powered by Rank Math SEO SEO Score In the dynamic field of medical communication, managing a content

68 / 100 Powered by Rank Math SEO SEO Score For centuries, the world of academic publishing was dominated by

62 / 100 Powered by Rank Math SEO SEO Score Proofreading is the meticulous review of written content which is

62 / 100 Powered by Rank Math SEO SEO Score Publishing research is a significant achievement in an academic or

58 / 100 Powered by Rank Math SEO SEO Score The European Medicines Agency (EMA) has released a question-and-answer guidance

65 / 100 Powered by Rank Math SEO SEO Score Artificial Intelligence (AI) has already begun to revolutionize numerous industries,

55 / 100 Powered by Rank Math SEO SEO Score In healthcare, staying up-to-date with the latest research, guidelines, and

67 / 100 Powered by Rank Math SEO SEO Score Pharmaceutical companies frequently co-package European Conformity (CE) marked medical devices

67 / 100 Powered by Rank Math SEO SEO Score In the rapidly evolving landscape of healthcare and scientific research,

61 / 100 Powered by Rank Math SEO SEO Score In today’s fast-paced and ever-evolving healthcare landscape, effective communication is

64 / 100 Powered by Rank Math SEO SEO Score Whether you are an aspiring medical writer looking to kickstart

58 / 100 Powered by Rank Math SEO SEO Score Integrating artificial intelligence (AI) into medical communication can streamline processes,

58 / 100 Powered by Rank Math SEO SEO Score In the realm of medical communications, the ability to deliver

66 / 100 Powered by Rank Math SEO SEO Score Regulatory medical writing is a specialized field that involves developing

73 / 100 Powered by Rank Math SEO SEO Score Regulatory writing, a crucial skill in various industries, can be

60 / 100 Powered by Rank Math SEO SEO Score In the world of medical and scientific publishing, ghost-writing has

60 / 100 Powered by Rank Math SEO SEO Score In the intricate realm of medical communications, precision and clarity

65 / 100 Powered by Rank Math SEO SEO Score Medical marketing agencies play a crucial role in the healthcare

67 / 100 Powered by Rank Math SEO SEO Score In today’s fast-paced world, PowerPoint presentations have become indispensable tools

59 / 100 Powered by Rank Math SEO SEO Score Medical writing is a specialized discipline within the clinical research

61 / 100 Powered by Rank Math SEO SEO Score The complexity of research and the pressure to publish in

61 / 100 Powered by Rank Math SEO SEO Score The evolving landscape of healthcare and pharmaceutical industries has catalysed

64 / 100 Powered by Rank Math SEO SEO Score In the realm of healthcare, the demand for proficient medical

62 / 100 Powered by Rank Math SEO SEO Score In latest fast-paced and dynamic enterprise environment, corporations face a

54 / 100 Powered by Rank Math SEO SEO Score In the digital era, AI-driven chatbots have become ubiquitous, transforming

57 / 100 Powered by Rank Math SEO SEO Score Medico-marketing documents play a crucial role in healthcare marketing by

69 / 100 Powered by Rank Math SEO SEO Score The healthcare industry constantly evolves, with new treatments, technologies, and regulations

61 / 100 Powered by Rank Math SEO SEO Score In the digital age, where the internet serves as a

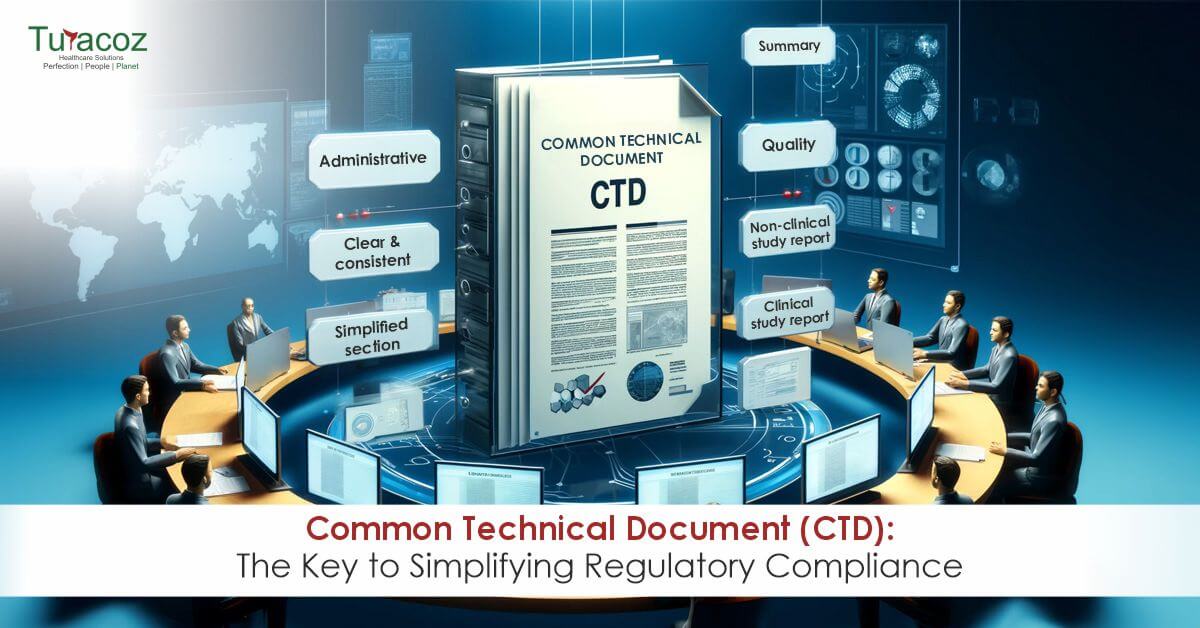

66 / 100 Powered by Rank Math SEO SEO Score Before the introduction of the Common Technical Document (CTD) in

55 / 100 Powered by Rank Math SEO SEO Score In the world of scientific research and communication, it is

66 / 100 Powered by Rank Math SEO SEO Score In clinical research, documenting results and findings is as important

64 / 100 Powered by Rank Math SEO SEO Score In scientific publishing, the accuracy and integrity of visual data

62 / 100 Powered by Rank Math SEO SEO Score In health and medical communication, understanding how to leverage media

62 / 100 Powered by Rank Math SEO SEO Score Developing new drugs and medical treatments is a testament to

61 / 100 Powered by Rank Math SEO SEO Score Scientific conferences stand as vibrant hubs of knowledge exchange, where

59 / 100 Powered by Rank Math SEO SEO Score In the ever-evolving landscape of cybersecurity, regularly updating software and

65 / 100 Powered by Rank Math SEO SEO Score The fusion of Artificial Intelligence (AI) with medical writing signifies

60 / 100 Powered by Rank Math SEO SEO Score The academic publishing industry, medical and scientific communication services have

70 / 100 Powered by Rank Math SEO SEO Score In the intricate world of medical research and publication, the

64 / 100 Powered by Rank Math SEO SEO Score Email authentication plays a crucial role in safeguarding your company

58 / 100 Powered by Rank Math SEO SEO Score In the fast-paced world of medical communication, where accuracy and

62 / 100 Powered by Rank Math SEO SEO Score Email remains one of the primary communication channels for businesses,

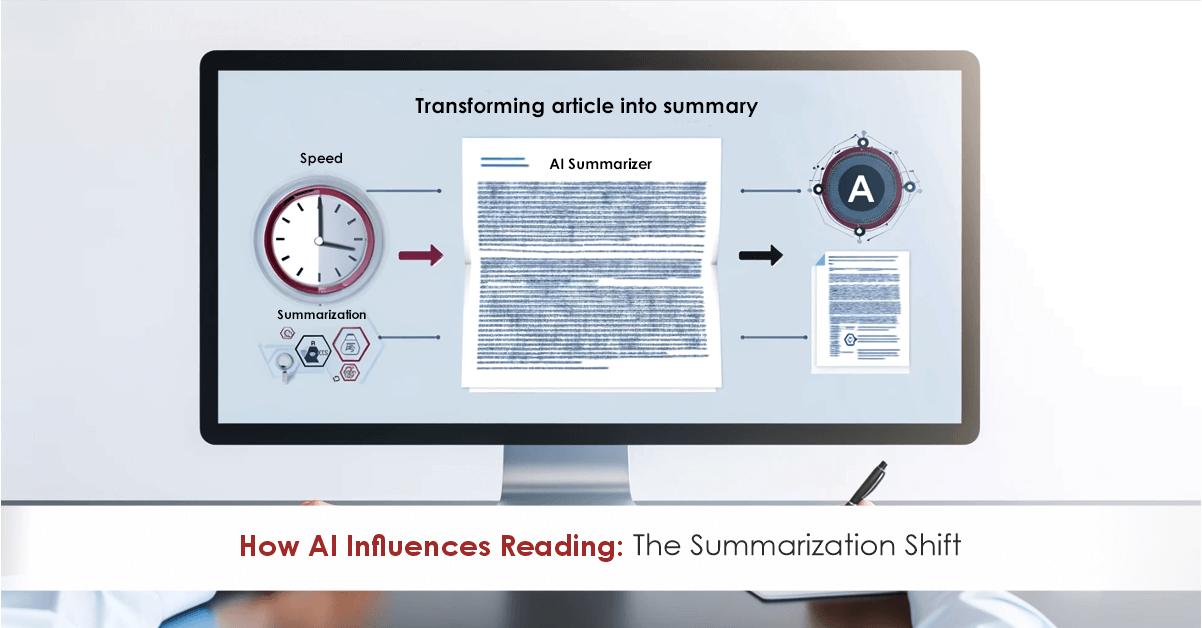

67 / 100 Powered by Rank Math SEO SEO Score The landscape of information sharing has seen a remarkable evolution.

66 / 100 Powered by Rank Math SEO SEO Score In a recent study published by the Journal of Librarianship

68 / 100 Powered by Rank Math SEO SEO Score In today’s digital landscape, protecting sensitive data and systems from

62 / 100 Powered by Rank Math SEO SEO Score In a recent and unprecedented event, the esteemed open-access journal,

65 / 100 Powered by Rank Math SEO SEO Score Medical writers find themselves at a critical juncture in the

65 / 100 Powered by Rank Math SEO SEO Score In today’s digital age, where cyber threats are on the

71 / 100 Powered by Rank Math SEO SEO Score In healthcare and medicine, the precision and clarity of information

68 / 100 Powered by Rank Math SEO SEO Score Healthcare research (Medical Research) and development is dynamic and staying

58 / 100 Powered by Rank Math SEO SEO Score Science often appears as a maze filled with complex terminologies

54 / 100 Powered by Rank Math SEO SEO Score In our interconnected and globalized world, phishing attacks via email

74 / 100 Powered by Rank Math SEO SEO Score In medical communications, the traditional reliance on text-heavy documents is

64 / 100 Powered by Rank Math SEO SEO Score The introduction of the ACCORD (ACcurate COnsensus Reporting Document) guidelines

72 / 100 Powered by Rank Math SEO SEO Score Medical writing plays a pivotal role in conveying medical information

69 / 100 Powered by Rank Math SEO SEO Score Medical writing serves as a crucial link in enhancing patient

ou cannot share a single post on social media without acknowledging its original creator. This is exactly how a citation works! Citations are a way to inform people about the source of the information while giving credit to the researchers. If we want to transport science from a journal to a layman, citations are the means for travelling.

“Content is the king”, they say. Keywords are the powerful weapons of your king. Words or phrases which represent your ideas or define the content in a manuscript are known as keywords. These act as context-specific probes helping researchers to find your manuscript better. The specific words that a researcher will write while looking through search engines, journals, indexing, etc.

66 / 100 Powered by Rank Math SEO SEO Score Visualization of data and information makes clinical research and science

Working within organized cabins, reporting to managers, and clocking in as per company norms: work culture has taken a leap beyond these precincts. “Opportunities are created by us”, as the saying goes. Freelance Medical Writing exemplifies this precisely. Undoubtedly, full-time jobs support a sense of structure, decorum and a running income, but they are not always conducive, especially for those who are unable to devote time to a nine-to-six job due to personal commitments. This is precisely where freelancing comes to rescue.

69 / 100 Powered by Rank Math SEO SEO Score Medico marketing and medical communications are adding a novel approach

69 / 100 Powered by Rank Math SEO SEO Score How to know if an article or a journal is

rs chosen by our healthcare workers. Nurses are the lifeline of our healthcare system. On duty, come rain or sunshine. A day without nurses in hospitals cannot be dreamt of because it is impossible and catastrophic.

Ideas and knowledge will be of no value if not transferred and communicated with the right words and some creativity. If you do not want blank faces staring back at you, it is important to put your message simply and innovatively. Presentations are a powerful way of unveiling valuable information, scientific research, training peers, etc. and keeping the audience engaged at the same time. But what makes a scientific presentation weight heavier than any other? The value of a well-designed presentation is neglected in the medical world.

You will not ring the bell of a home where you are welcomed without a smile. This is the simplest description of client servicing. Be it a street eatery or a multinational company, they are running fundamentally on two core aspects: Quality and Service.

Holding the baby for long hours, cleaning poop with a smile on face, singing lullabies relentlessly, spending sleepless nights are the most common scenes of new motherhood. But what is not seen are the collateral surges of emotional drain-outs, restlessness, insomnia, anxiety, panic, bouts of despondency and detachment. While the former is normal, the latter is compelled to be normalized.

Digitalization has recalibrated healthcare to new heights. It is imperative for healthcare professionals and medical students to stay abreast with scientific and clinical advancements, but ironically the rush hours of duty leave them very little room and time for it. Nevertheless, newer technological options have widened the windows to healthcare communications and one such innovation is the emergence of Podcasts.

Fingers constantly working on laptops, eyes lost in the starlight of the screen, and mind battling

with anger, fear, anxiety, judgments, and pressure to win the race! This is the image of a modern

human working to earn a little more and finding peace a little less. The struggle of the mind

against the world has led to an increase in mental health issues.

As it brings forth equally-chances and struggles, opportunities, and failures, wishes and disappointments, it becomes imperative to choose our area of focus, wisely.

From the corridors of laboratories, science has found its way to our mobile screens. Digital media and social apps have pulverized the boundaries between a published paper and the public. Today a scientist can communicate and share his progress with everyone on the planet irrespective of his background. During the pandemic, Twitter brought an evolution in the minds of people about research and science.

“What is the side effect of paracetamol? Why can’t my kid get vaccinated? Which vaccine is more effective?” The patients and worried parents were looking all over on internet to treasure trove the answers to these questions. In such a scenario and many of its likes, Google becomes the go-to database for information, but it, unfortunately, lead to misinformation, misdiagnosis, spurious remedies, all heading to graver damage to health.

Needles, pills, liquid diets, and a room with the daunting odor of disease, are things a cancer patient is never devoid of experiencing. Cancer is a disease that takes a human down physically and mentally; a disease in which your own body cells refuse to listen to you. This metastasizing monster has its claws all over the globe and the entire healthcare community is leaving no stones unturned to find that perfect cure.

“We are sorry to inform you that your submission is rejected”- This is something you never want to hear or read but this is most often experienced. These words are disheartening. When we start research, it becomes a dream to see that work turning into pages of a journal.

She was unfamiliar with the bacteria inside her and hence even after taking Multi-Drug- Therapy she didn’t eat or sleep with her children for more than four years. The universe might be testing her as she lost her husband during this span of her life. She was denied her rights towards her husband’s estate.

83 / 100 Powered by Rank Math SEO SEO Score Introduction to Medical Writing Medical writing involves producing clear, precise

In recent times, Breast Cancer has been one of the predominant cancers. How predominant? Let’s have a look at the numbers. A total of 7.8 million women were diagnosed worldwide within the 5-year period according to the WHO (World Health Organization) statistics reported at the end of 2020.

64 / 100 Powered by Rank Math SEO SEO Score What is artificial intelligence (AI)? As per the Merriam Webster

feeds. However, have you ever questioned yourself why there is a sudden surge in opting for these diets? Where did we all go wrong in making diet choices? Are these diets necessary for everyone? The answer is NO.

Polycystic Ovary Syndrome (PCOS), also known as Polycystic Ovarian Disease (PCOD) is one of common hormonal syndrome that women blame for their weight gain. The cause of PCOS is still unknown, but studies have shown that it may involve a combination of genetic and environmental factors (like lifestyle, pollution).

According to the US Food and Drug Administration (FDA), a ‘device recall’ is defined as, “when a manufacturer takes a correction or removal action to address an issue with the medical device that violates the FDA law”1.

Diabetes Mellitus (DM) is an endocrine metabolic disorder, characterized by elevated blood glucose level. DM is sub-classified into following categories.

Coronavirus disease 2019(COVID-19) is giving the world a rollercoaster ride with shoot-up in infection cases globally. With more than 14 crores of infection cases worldwide in 16 months, the world is looking up to a miracle to stop this pandemic. One way of controlling the spread is to get vaccinated. More than 98 crores vaccines have been administered globally till the end of April 2021 and it is still making way to reach people in every corner of the world. India is in its second wave of COVID-19 infection with a tremendous rise in corona infection cases compared to the first wave of infection. But a positive side of this is, the largest vaccination drive of the world is under progress in India and giving positive hope for Indians to overcome this situation.

Class I devices are the lowest risk medical devices. However, the manufacturers of these devices also need to act immediately to comply with the new European Union (EU) Medical Devices Regulation (MDR); otherwise, they risk being unable to place their devices on the EU market after May 26, 2021.

Sleep is an important part of our day-to-day life and everybody long for a good night’s sleep after a day’s work. But do you know why you sleep at night and are awake during daytime? Do you know we have a biological clock inside us? Do you know why some people fall asleep while talking or even while driving? If you want to know the answers to these questions, please read on.

53 / 100 Powered by Rank Math SEO SEO Score What is pain? Pain can be a displeasing and uncomfortable

70 / 100 Powered by Rank Math SEO SEO Score Working from home comes with its own set of pros

69 / 100 Powered by Rank Math SEO SEO Score Background According to European Commission a web-based portal EUDAMED is

53 / 100 Powered by Rank Math SEO SEO Score As the Medical Device Regulation (MDR) deadlines are approaching for

The COVID-19 pandemic has overwhelmed our healthcare delivery systems and has effected the delivery of medical care across the spectrum. Medical device companies have also been hit by the crisis

as they struggle to make decisions regarding supply chains, and regulatory obligations in the midst of uncertainty.

Please find our blog discussing the impact of the pandemic on medical device regulations.

67 / 100 Powered by Rank Math SEO SEO Score Background Writing is a medium of human communication to express

It has been weeks and months since we have stopped counting days being in this pandemic-driven lockdown. There is a unique quality to this day-by-day pandemic despair; this quarantine depression is edging humans into physical and mental stagnation. According to an article I read in the Hindu couple of days back, approximately 12.2 crore Indians lost their jobs during the coronavirus lockdown in April 2020 only, which makes COVID 19 pandemic much more stressful for people.

65 / 100 Powered by Rank Math SEO SEO Score Good Clinical Research Practice (GCP) is an established international ethical

53 / 100 Powered by Rank Math SEO SEO Score Medicine is an ever-changing science. As new researches and clinical

Cancer refers to a medical condition characterized by uncontrolled division of cells forming tumor. During normal cell cycle, the cells grow, divide and die and new cells take their place, whereas in cancer, the abnormal cells continue dividing and do not die.

The gift of blood is the gift of life. There is no other substitute for human blood. According to statistics, every two seconds someone is in dire need for blood. And only one pint of blood can save up to three lives. Data collected over a span of many years suggests that the blood type most often requested by hospitals is Type O.

The world’s leading public health challenge is the HIV virus that leads to AIDS. In 2018, around 37.9 million people were infected with HIV/AIDS and approximately 1.7 million more joined the club worldwide.

64 / 100 Powered by Rank Math SEO SEO Score The human immunodeficiency virus (HIV) invades the immune system and

73 / 100 Powered by Rank Math SEO SEO Score The campaign “World Diabetes Day (WDD)” was launched in 1991

79 / 100 Powered by Rank Math SEO SEO Score According to statistics by the WHO, “The number of people

59 / 100 Powered by Rank Math SEO SEO Score Disease management programs (DMPs) are defined as “structured treatment plans

67 / 100 Powered by Rank Math SEO SEO Score Breast cancer is a growing concern as it is reported

68 / 100 Powered by Rank Math SEO SEO Score What are opioids? Opioids (Figure 1) are a class of

52 / 100 Powered by Rank Math SEO SEO Score Economic development of the people has improved their lifestyle. But

64 / 100 Powered by Rank Math SEO SEO Score The term ‘pharmacovigilance’ was coined in the mid 70’s by

65 / 100 Powered by Rank Math SEO SEO Score Introduction A biosimilar product, as defined by USFDA, is “a

69 / 100 Powered by Rank Math SEO SEO Score Peer Review is the process of evaluation of manuscripts submitted

Osteoporosis is a silent age-related skeletal disease characterized by loss

The month of May is commemorated as Cystic Fibrosis Awareness

Most of us have possibly used it or sprinkled on

Diagnostics play a very important role in our everyday life,

The regulatory authorities have been on fire lately. They are

Effective from March 19, 2019, The New Drug and Clinical

Women Innovation and Entrepreneurship Foundation (WIFE) had conducted the second

In the clinical trials industry, 80% of trials do

Introduction Financial disclosures enable the readers to evaluate the potential

The global statistics for HIV/AIDS 2017 have revealed that around

Ever since HIV/AIDS was discovered, there have been lots of

What are orphan drugs? The term orphan drug refers to

Not everything sugar is good for you! The overdose of

The month of October is dedicated to breast cancer to

Publication planning is that part of the pharmaceutical landscape that

Breast cancer is the most prevalent form of cancer in

With the advances in medical technology and innovations in field

Rabies, a viral disease that is mainly transmitted by an

It is estimated that nearly 44 million people worldwide have

You might wonder about the title of this article, figuring

Suicide has become the 3rd highest reason for deaths today

Managing a team to success requires more than just simply assigning tasks

When a device seems to fit onto the definitions of

The World Senior Citizens Day is observed on 21st August

What is an Original Research Article? Original research articles, the

“Are you into healthcare advertising?” “Yes!” “Oh! It involves so

With the World Breastfeeding Week (1st Aug-7th Aug) towards its

“Feedback is the key to improvement.” I wish giving

On 6th July 2018, Turacoz Healthcare Solutions organized an

Health economics and outcomes research (HEOR) activities comprise Pharmacoeconomics

People have argued about the concept of “Work-Life Balance” for

The medical device industry is undergoing a surge in growth,

With the years passing, International Yoga Day has received

A research publication is considered as the highest-level medium of

In May 2018, Turacoz Skill Development Program (TSDP), a wing

Turacoz conducted a workshop on “Literature Search and Lifecycle of

India – Turacoz Skill Development Program (TSDP) is an initiative

Acceliant, the global leader in e-clinical data management solutions, has

Turacoz Healthcare Solutions, a medical communications company provides customized and

Women are pivotal contributors to society in their roles as

World No Tobacco Day (WNTD) is celebrated every year on

What is a graphical abstract? A graphical abstract is a concise and

Our current lifestyle revolves around our workplace duties and responsibilities.

“Ready to Beat Malaria” World Malaria Day has become a

Global Market Scenario for Orphan Drugs Orphan drugs are the

Introduction A medical device is an essential part of the healthcare system.

Introduction Drug regulations can be defined as the overall control

Over and over we keep hearing about how employees want

What is a Rare Disease? Rare disease refers to a

Rare Disease Day On the 28th of February, rare disease

Work-From-Home (WFH) is one of the most debated topics lately.

Medical devices comprise a vast range of equipment, ranging

Clinical Evaluation Report for Medical Devices Clinical evaluation report (CER)

Global Cancer Diagnostics Market The global cancer diagnostics market was

Cancer is the second most common cause of death after

Healthcare systems are driven by regulations which ensure patient’s access

Evidence-based practice (EBP) is defined as the conscientious, explicit and judicious

Mergers and acquisitions (M&As) refer to consolidation of companies or

The term diabetes mellitus is defined as a metabolic disorder

When the balance of body’s metabolic processes is disrupted, certain

Turacoz was represented at the 13th Pharmacovigilance Conference in Chicago (27-28 Sep)

Alzheimer’s Disease (AD) is emerging as one of the critical

Healthcare professionals are expected to provide the best evidence based

We all are aware of the different types of publication

The Indian healthcare industry is on a high growth trajectory

Oral health is an integral part of our general health,

Nations around the globe celebrate World Blood Donor Day (WBDD),

IMRAD is nothing but the acronym used for the 4

A yearly celebration since 1987, ‘World No Tobacco Day’ observed

World Health Organization (WHO) celebrates 7th of April every year,

World Down Syndrome Day (WDSD) is a global awareness day

A lot of talking about Mediterranean food and its benefits

5 Medical Writing Tips for Novice Writers Always start with

Know About the DASH Plan “DASH” or the Dietary Approaches

Under the aegis of Turacoz Healthcare Solutions, Turacoz Skill Development

Facts Approximately 83% of liver cancer cases are diagnosed in

“Love Your Bones and Protect Your Future” World Osteoporosis Day

Desktop dining has now become a common phenomenon with approximately

October is the “Breast Cancer Awareness Month”. Breast cancer is

Cardiovascular diseases (CVDs) are a group of disorders of the

This year marks the 10th World Rabies Day, which has

Turacoz is a medical communications company providing customized and cost-effective

Editing is an important task that is performed by an

Medical writing is a multidimensional profession which requires creating numerous

What is Triclosan? Triclosan is commonly used as a disinfectant

Being a medical writer has a lot of allure, particularly

As the cool showers of monsoon bring relief after the

Disturbed sleep and strained red eyes seem to take a

Viral hepatitis, an inflammatory liver disease is commonly caused by

Introduction Dehydration is a condition where free water loss exceeds free

Turacoz attended the 6th Asia-Pacific Pharma Congress organized by Omics International

Humans were designed to move around and stay physically active.

Diabetes mellitus (DM) has become one of the most challenging health problems in the 21st century. It is a serious

Helen Adams Keller (June 27, 1880 – June 1, 1968) was an American author, political activist, and lecturer. She was

It’s again Ramadan time. Millions of Muslims fast during this period and abstain from eating and drinking. However, two main

Turacoz Healthcare Solutions, a medical communications company, will be attending the 6th Asia-Pacific Pharma Congress, where Dr. Namrata Singh, director of

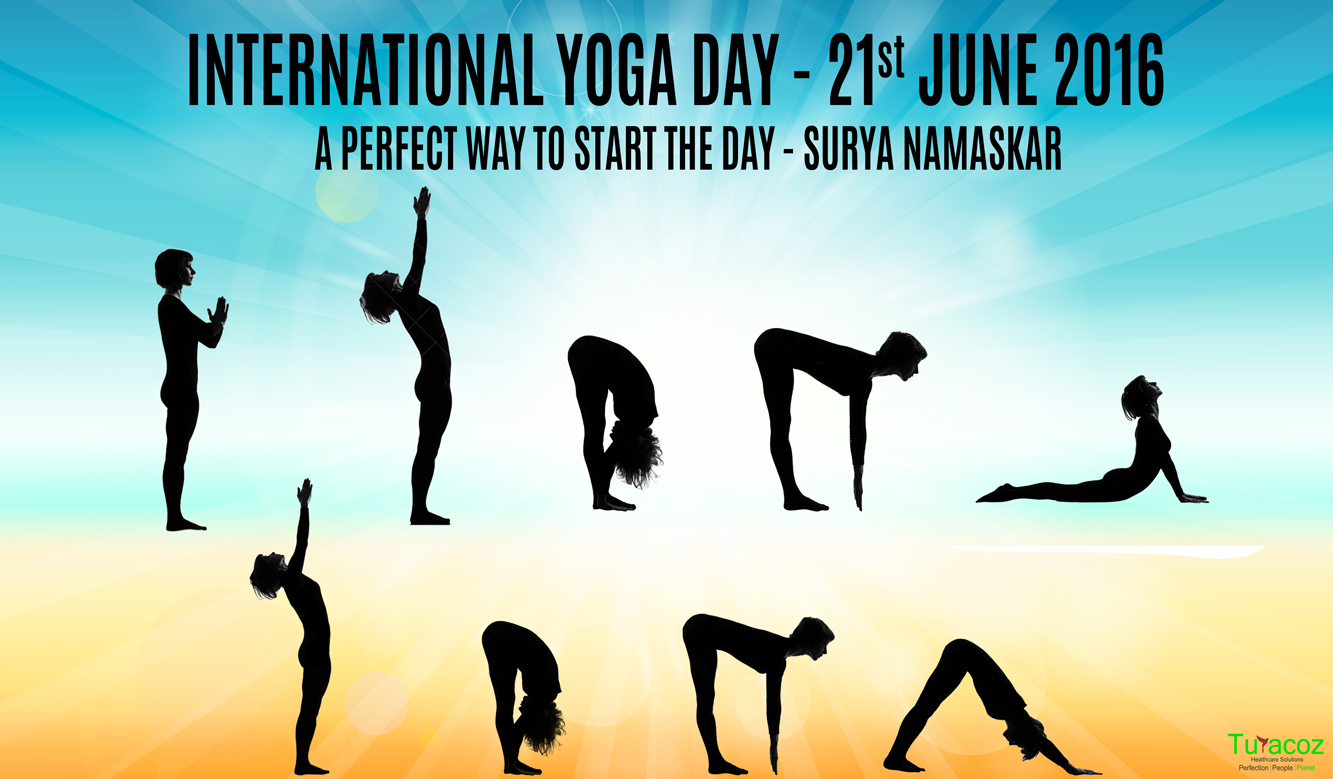

Surya Namaskar, or sun salutation, is a way of saluting the sun, the ultimate source of energy and life on earth.

Men’s Health Month is a special awareness period for men’s health observed across the globe. It was passed by Congress

Ramadan is a holy month for Muslims in which consumption of food and drinks, medications, and smoking is forbidden between

Turacoz Skill Development Program conducted a medical writing workshop on publication at Tata Memorial Hospital, Mumbai on 4th June, 2016. The

Turacoz skill development program conducted a seminar on 02 June, 2016 in Center for Faculty Development (Faculty of Pharmacy), Jamia

It’s not just a pain. It’s a complete physical, mental, and emotional assault on your body. -Jamie Wingo May is declared

Turacoz Skill and Development program under the umbrella of Turacoz Healthcare Solutions successfully organized its second Medical Writing workshop in

The month of May is declared as “National Asthma and Allergy Awareness Month” by Asthma and Allergy Foundation of America.

“For Hepatitis, Prevention is the Best Intervention” The month of May is titled as “Hepatitis Awareness Month”, as a proactive

Malaria is a serious life-threatening parasitic disease caused by parasites known as Plasmodium vivax (P.vivax), Plasmodium falciparum (P.falciparum), Plasmodium malariae

Turacoz Healthcare Solutions conducted a medical writing workshop on 16th April, 2016 at Country Inn and suites by Carlson, Bangalore.

Let’s Join Hands to Fight Against Hemophilia Hemophilia is one of the oldest known genetic bleeding disorders which is caused

The Department of Pharmaceuticals (DoP), Government of India, released Uniform Code of Pharmaceuticals Marketing Practices (UCPMP) on 1st January 2015.

Autism is a serious, lifelong developmental disability characterized by considerable impairments in social interactions and communication skills, as well as

World Parkinson’s Disease Day: 11th April, 2016: World Parkinson’s disease day is celebrated every year on 11th April to commemorate

Tuberculosis (TB) is an infectious disease caused by the bacillus Mycobacterium tuberculosis. It usually affects the lungs (pulmonary TB), but

Overview: Colorectal cancer is the abnormal growth of cells in the colon or rectum (parts of the large intestine) that

World Glaucoma Week (March 6-12, 2016): Be Informed, Be Safe Each year the World Glaucoma Association (WGA) and the

World Kidney Day (WKD) is a joint initiative of the International Society of Nephrology (ISN) and the International Federation of Kidney

Turacoz conducted a seminar on Medical writing as a career for over 125 students with Biotechnology and Life sciences background

Turacoz conducted a Medical writing workshop on February 16th, Tuesday at Radisson Blu GRT, Chennai. It is one of the

Turacoz’s Medical Director, Dr. Namrata Singh was present at the InnoHealth conference held on February 5th at PHD house, Chamber

Turacoz Healthcare Solutions conducted a Medical Writing Workshop in Dubai on 30th January 2016 at Radisson Blu Downtown. The participants

Arab Health Congress is one of the largest and most extravagant gatherings of pharmaceutical and healthcare professionals. It

February 12 is declared as Sexual and Reproductive Health Awareness Day annually. This day provides an opportunity to raise awareness

Air pollution is the introduction of chemicals, particulate matters or biological materials in air, for a sufficient time that causes

What is a Publication? Publications are a healthcare solution which bridges communication of research conducted to the researchers around the

“Anyone who stops learning is old, whether at twenty or eighty. Anyone who keeps learning stays young. The greatest thing

Practice Flawless English for Good Scientific Writing Knowledge of grammar is one of the keys to writing clearly and credibly.

It is believed that around 1920, a deadly virus crossed species from chimpanzees to humans in Kinshasa (Africa), and led

World AIDS Day, 1 Dec was first declared by the World Health Organization and the United Nations General Assembly in

‘Medical writing’ is a perfect amalgamation of science and art. As a medical writer, one has to maintain a good

As described by the WHO, immunization is the process whereby a person is made immune or resistant to an infectious

Over the years, many technological advancements have come to light in the treatment of diabetes. These technological advancements

Clinical studies are conducted to test the drugs and medical devices in humans before their approval and availability for the

Reduce your risk today: Eat healthy, Walk more and Weigh less Lifestyle modification for prevention of diabetes mellitus Structured programs

Publishing the results of a study completes the research conducted. As mentioned by EH Miller, “If it was not published,

Knocking out Triple Negative Breast Cancer: A new paradigm in treatment Triple negative breast cancer (TNBC) are the subtypes of

Liver cancer: Treatment Liver cancer treatment is generally based on the stage of disease and the patient response to treatment.

Since 1997, October 20, is observed as the “World Osteoporosis Day” for raising global awareness on the prevention, diagnosis and

Protect yourself from Breast Cancer Over the last ten years or so, breast cancer has been the most common cancer in most

What is liver cancer or hepatic cancer? Liver is the largest internal organ in the body. It is essential for

“A heart for life” World Heart Day (sponsored by World Heart Federation) was founded in 2000, a biggest intervention against

September is Prostate Cancer Awareness month Globally, prostate cancer is the second most frequently diagnosed cancer in men and the

World contraception day (WCD) was first conceived in the year 2007 by 10 international family planning organizations in order to

Understanding environmental health The International Federation of Environmental Health, better known by its acronym IFEH, works to impart knowledge regarding

Newborn screening test (also known as Guthrie test) is one of the successful innovations in the modern era. It is

Fast Facts 2 A genetic disease, resulting from environmental (gluten) and genetic (HLA and non-HLA genes) factors. Estimated 1 %

Attempting suicide and having suicidal tendencies are the most extreme forms of self-harming behaviour exhibited by humans. Centre for Disease

Psoriasis is not all of you, it is just a part of you like everything else Psoriasis is generally classified

Dr. Namrata’s mantra for life is “nothing is impossible”. Her experience of 10 years as a pediatrician and 9 years

Today on Organ Donation day, Turacoz team members pledge their organs for a noble cause and want to contribute to

“Once you choose hope, anything’s possible.” Christopher Reeve Sarcoma, may be defined as “a malignant tumor of connective or other

The 5 tips for desktop workers are absolutely essential to maintain good health and have a long innings professionally.

Ramadan is a lunar based fasting month for Muslims. Muslims who fast during this time should refrain from eating, drinking,

Sarcomas are the tumors originating from mesenchyme and contribute to about 20% of all pediatric solid malignant cancers and less

Yoga has been an integral part of India since the Indus Saraswati civilization, for about 5000 years now. With time the

Asthma is a worldwide disease affecting an estimated 300 million individuals globally. Some authors also reported prevalence of Asthma as

“In three words I can sum up everything I’ve learned about life: it goes on.” ― Robert Frost Hemophilia- a

Partner with Turacoz to bring science to life through strategic and evidence-based communication.